- #WINDOWS SPARK INSTALL HOW TO#

Until then, we appreciate your patience in performing some of the steps manually.

Build the worker:

    cd C:\github\dotnet-spark\src\csharp\\
    dotnet publish -f netcoreapp3.1 -r win-x64

Once the build is successful, you will see the appropriate binaries produced in the output directory. Sample console output:

    Directory: C:\github\dotnet-spark\artifacts\bin\\Debug\net461

These steps will help in understanding the overall building process for any .NET for Apache Spark application.

Open src\csharp\ in Visual Studio and build the project under the examples folder (this will in turn build the .NET bindings project).

If you want, you can write your own code in the project (the 'input_file.json' in this example is a JSON file with the data you want to create the DataFrame with):

    using Microsoft.Spark.Sql;

    // Instantiate a session
    SparkSession spark = SparkSession.Builder().GetOrCreate();
    DataFrame df = spark.Read().Json("input_file.json");
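Spark's JSON reader expects, by default, one complete JSON object per line (JSON Lines) rather than a single pretty-printed array. As a minimal sketch, assuming Python is available on the machine, the helper below writes an `input_file.json` in that layout. The function name `write_json_lines` and the sample rows are hypothetical and only serve as an illustration.

```python
import json


def write_json_lines(path, records):
    """Write one JSON object per line (the layout Spark's JSON reader expects by default)."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")


if __name__ == "__main__":
    # Hypothetical sample rows; replace them with your own data.
    people = [
        {"name": "Michael"},
        {"name": "Andy", "age": 30},
        {"name": "Justin", "age": 19},
    ]
    write_json_lines("input_file.json", people)
```

Rows do not need identical fields; Spark infers a schema across all lines and fills missing values with null.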

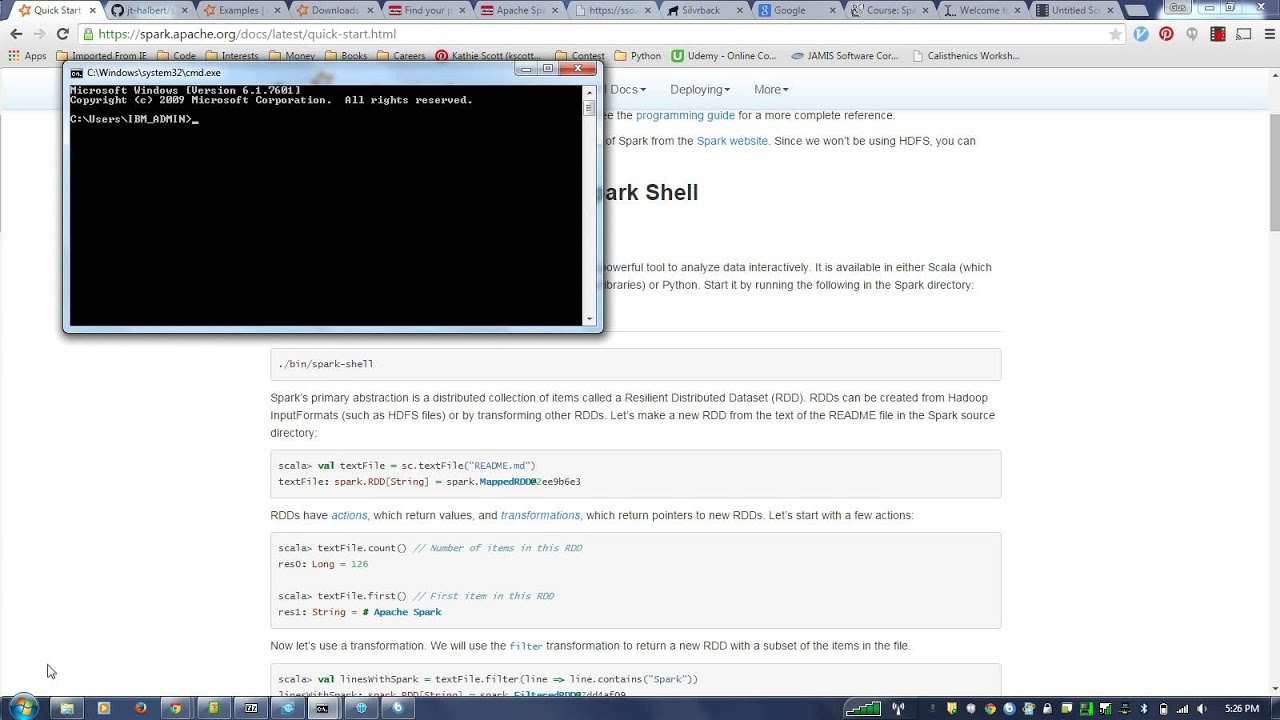

Build

For the remainder of this guide, you will need to have cloned the .NET for Apache Spark repository into your machine. You can choose any location for the cloned repository.

.NET for Apache Spark Scala extensions layer

.NET for Apache Spark has the necessary logic written in Scala that informs Apache Spark how to handle your requests (for example, a request to create a new Spark Session, or a request to transfer data from the .NET side to the JVM side). Build the .NET for Apache Spark Scala extension layer:

    cd src\scala
    mvn clean package

You should see JARs created for the supported Spark versions.

This section explains how to build the sample applications for .NET for Apache Spark.

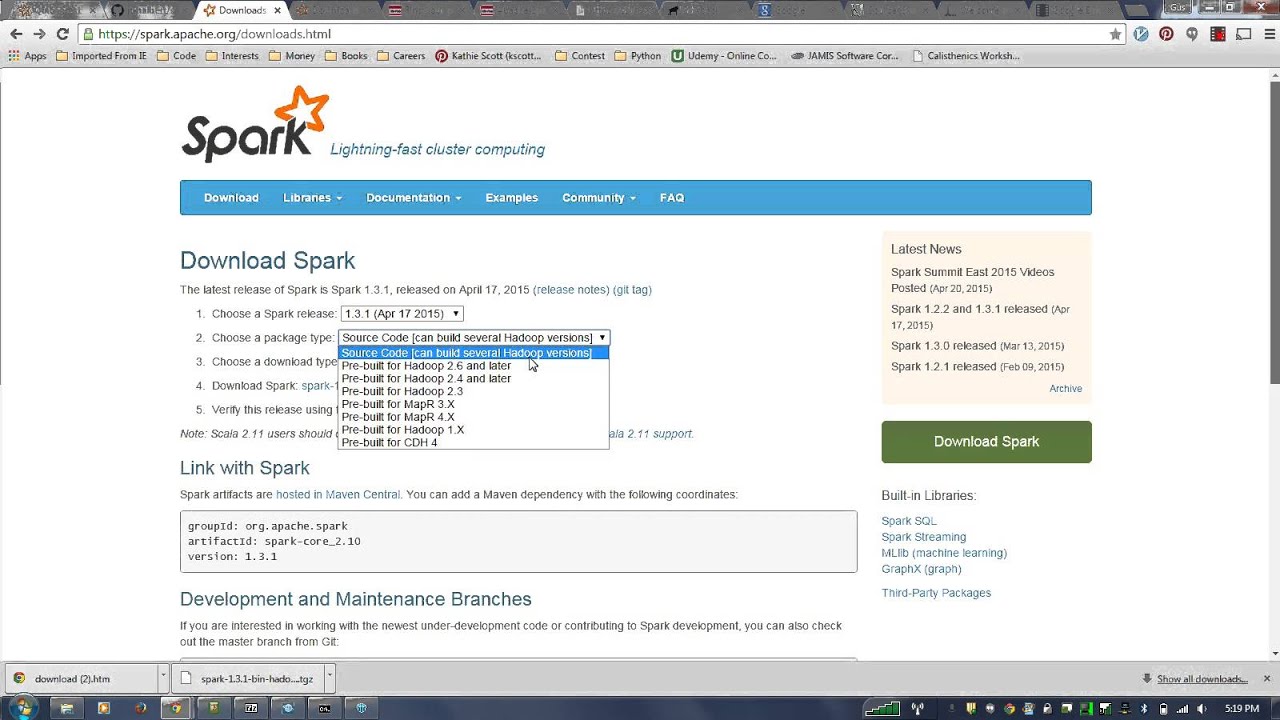

Set SPARK_HOME to your Apache Spark directory. For instance, using command-line:

    set SPARK_HOME=C:\bin\spark-3.0.1-bin-hadoop2.7\

Add Apache Spark to your PATH environment variable.

Verify you are able to run spark-shell from your command-line. Sample console output:

    Using Scala version 2.12.10 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_201)
    Type in expressions to have them evaluated.

WinUtils

Download the winutils.exe binary from the WinUtils repository. You should select the version of Hadoop the Spark distribution was compiled with. For example, use hadoop-2.7.1 for Spark 3.0.1.

Save the winutils.exe binary to a directory of your choice.

Set HADOOP_HOME to reflect the directory with winutils.exe (without bin). For instance, using command-line:

    set HADOOP_HOME=C:\hadoop

Set the PATH environment variable to include %HADOOP_HOME%\bin. For instance, using command-line:

    set PATH=%HADOOP_HOME%\bin;%PATH%

Make sure you are able to run dotnet, java, mvn, spark-shell from your command line before you move to the next section. Feel there is a better way? Open an issue and feel free to contribute.

A new instance of the command line may be required if any environment variables were updated.
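The checks described here (the four tools on PATH, and winutils.exe under %HADOOP_HOME%\bin) can also be scripted. The following is a minimal sketch, assuming Python is installed; it is not part of the .NET for Apache Spark tooling, and the function names `missing_tools` and `winutils_path` are hypothetical helpers introduced for illustration.

```python
import os
import shutil
from pathlib import Path

# Tools the guide asks you to be able to run from the command line.
REQUIRED_TOOLS = ["dotnet", "java", "mvn", "spark-shell"]


def missing_tools(tools=REQUIRED_TOOLS, path=None):
    """Return the tools that cannot be resolved on PATH."""
    return [tool for tool in tools if shutil.which(tool, path=path) is None]


def winutils_path(env=None):
    """Return the expected winutils.exe location, or None if HADOOP_HOME is unset."""
    env = os.environ if env is None else env
    home = env.get("HADOOP_HOME")
    if not home:
        return None
    # HADOOP_HOME points at the directory that contains bin\, not at bin\ itself.
    return Path(home) / "bin" / "winutils.exe"


if __name__ == "__main__":
    for tool in missing_tools():
        print(f"not on PATH: {tool}")
    exe = winutils_path()
    if exe is None:
        print("HADOOP_HOME is not set")
    elif not exe.is_file():
        print(f"winutils.exe not found at {exe}")
```

Run it from a newly opened command prompt so that recently changed environment variables are visible; no output means every check passed.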